I Built a PHP API Without Knowing PHP. That’s a Problem.

4/28/2026

Does learning a programming language even matter anymore?

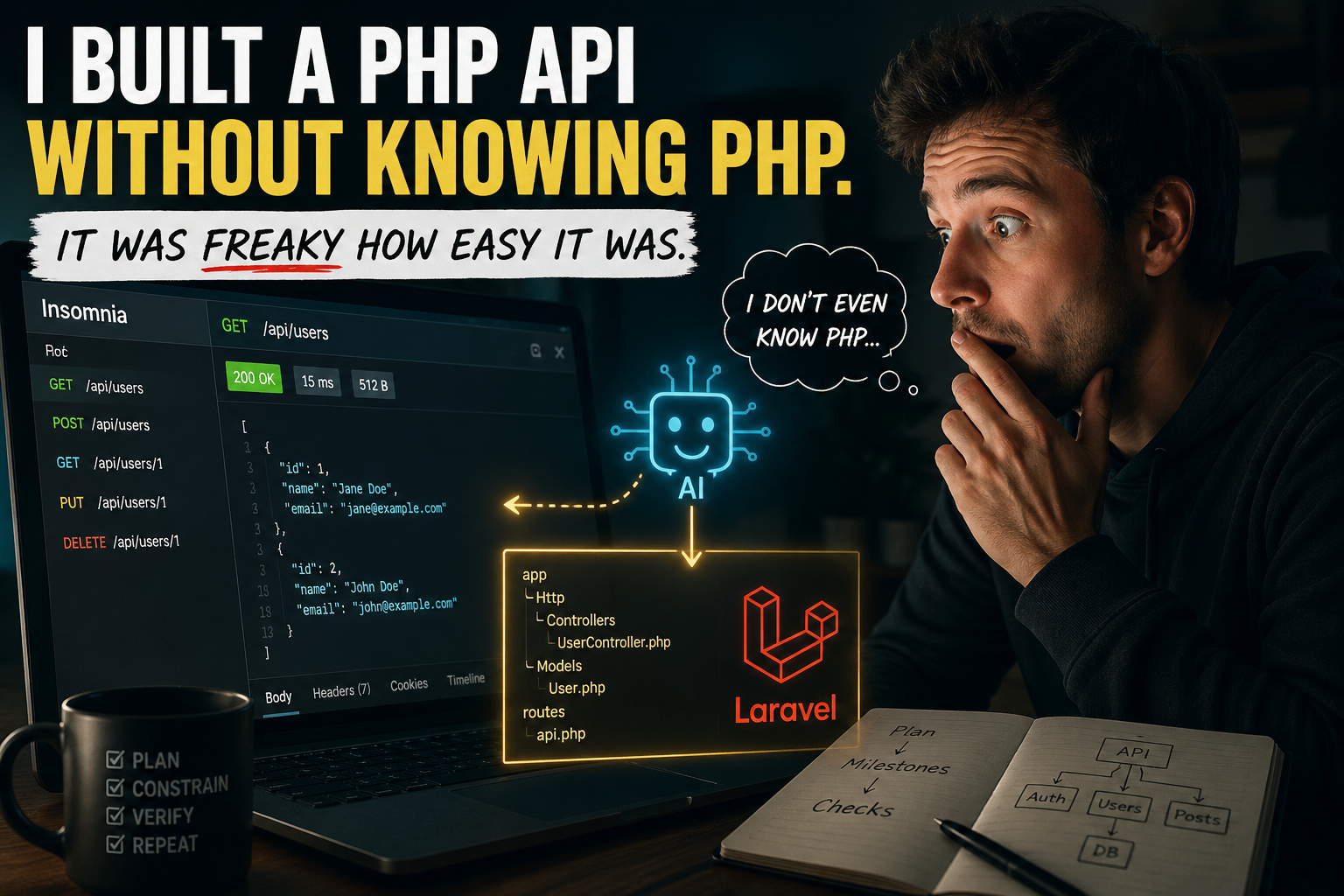

I built an API in PHP without really knowing PHP, and it was honestly freaky how easy it was.

I asked the AI how to start from scratch, followed the steps, opened Insomnia, and started hitting CRUD endpoints.

Boom. Everything worked… wait, what? It just… worked?!

I even tried to throw a monkey wrench in by adding a relationship to the underlying model (the part where I usually stumble), and I still ended up with a functioning API.

Here’s the weird part: if you showed me the code right now, I’d have to reverse-engineer it and theorize about what it’s doing. And writing the same thing from memory? Ha! Not a chance. I was staring at a working answer, and for a moment it felt like the language didn’t matter. Or did it?

That experience changed how I think about “learning languages” in the age of Cursor and modern models. My current take is not that languages are irrelevant, but that raw syntax mastery is dropping in value. The differentiator is engineering judgment: how you design, constrain, test, and verify what gets built.

This only worked because I treated the AI like a junior teammate, not a vending machine. “Prompting” would have been: generate a Laravel API and accept whatever showed up. “Engineering” was: define the shape first, break it into small milestones, and force each step to earn trust with a real check before moving on.

A quick note on why I even chose PHP: I wanted a language I had never seen before, had no working knowledge of, and whose ecosystem I knew nothing about. Still, I assumed a mature language would have mature web/API defaults. I was right.

After ~10 years building backend systems, the transferable part wasn’t a checklist of components. It was the mental model: how requests flow, where bugs hide, what “secure by default” should mean, and what to verify when something seems too easy. That’s what I brought into this experiment.

What surprised me (coming from Spring Boot and .NET)

Laravel was a huge surprise. It’s opinionated like Ruby on Rails: strong conventions, familiar boxes (controllers, models, migrations), and a clear default path. I know Ruby on Rails’ vibe, so I just carried that over to Laravel.

Once the stack was chosen, things moved fast. In ~15 minutes I had a server running, the database connection verified, and a tight loop for iterating.

Why opinionated frameworks make AI better

Opinionated frameworks don’t just help you write code. They constrain the problem space. With a standard layout and a clear “Laravel way,” both you and the model have fewer degrees of freedom, so you get fewer weird detours and more consistent structure.

That’s why I think this experiment would have been harder in a less opinionated ecosystem like Node + Express. When you have to choose the architecture, the patterns, and the library stack, the AI can’t supply “taste.” If you don’t bring strong defaults, you get drift.

In a convention-heavy framework, you don’t have to understand every internal mechanism on day one, as long as you can verify behavior and stay inside the guardrails.

Here’s how I kept the AI useful:

· Plan first (then code). I start with a short plan, ask it to call out edge cases, and lock the scope.

· Critique loop. If I’m in unfamiliar territory, I’ll have a second model critique the plan before I generate code.

· Execute in small milestones. One feature at a time, with constraints, so the architecture doesn’t drift.

· Verification beats vibes. Every milestone needs one concrete check I can run, not just “tests passed.”

· Be strict about reuse. If you don’t ask for shared helpers/mocks, the AI will duplicate code because it’s the fastest path to “done.”
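
To make “one concrete check” less abstract, here is a minimal sketch of a black-box check for a CRUD milestone. It is not the script from my experiment; it is written in Python on purpose, because a check like this talks to the API over HTTP and doesn’t care what language the server is written in. Everything specific is an assumption for illustration: the `/api/posts` route, the `title` field, a flat JSON response with an `id`, and the status codes.

```python
import json
import urllib.error
import urllib.request


def make_http_call(base_url, token=None):
    """Build call(method, path, body=None) -> (status, parsed JSON or None)."""
    def call(method, path, body=None):
        data = json.dumps(body).encode() if body is not None else None
        headers = {"Accept": "application/json", "Content-Type": "application/json"}
        if token:
            headers["Authorization"] = f"Bearer {token}"
        req = urllib.request.Request(base_url + path, data=data,
                                     headers=headers, method=method)
        try:
            with urllib.request.urlopen(req, timeout=5) as resp:
                status, raw = resp.status, resp.read()
        except urllib.error.HTTPError as err:
            # urllib raises on 4xx/5xx; for a check those are just results
            status, raw = err.code, err.read()
        try:
            return status, json.loads(raw) if raw else None
        except ValueError:
            return status, None  # body was not JSON (e.g. an HTML error page)
    return call


def check_crud_round_trip(call, resource="/api/posts"):
    """Create, read, update, and delete one record; fail loudly on any surprise."""
    status, created = call("POST", resource, {"title": "milestone check"})
    assert status == 201, f"create returned {status}"
    item = f"{resource}/{created['id']}"

    status, fetched = call("GET", item)
    assert status == 200 and fetched["title"] == "milestone check"

    status, updated = call("PUT", item, {"title": "edited"})
    assert status == 200 and updated["title"] == "edited"

    status, _ = call("DELETE", item)
    assert status in (200, 204), f"delete returned {status}"

    status, _ = call("GET", item)
    assert status == 404, "deleted record is still readable"
    return True
```

Against a local dev server this would be run as something like `check_crud_round_trip(make_http_call("http://localhost:8000"))`, where the address is also an assumption. The last step is the part that matters to me: it checks a behavior the happy path never exercises.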

My definition of a “good plan” (and the prompt that keeps AI honest)

A “good plan” is short, scoped, and written in outcomes plus checks. Then I do a quick reality check: cut over-engineering, make sure we’re building one coherent system, and restate what I’m going to verify at the end of each milestone.
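
One way to picture “outcomes plus checks” is as data: each milestone pairs a sentence about what should be true with one runnable check, and the plan stops at the first check that fails. The sketch below is purely illustrative; `Milestone` and `run_plan` are names I made up, not part of any tool, and it is in Python only to keep it short.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Milestone:
    outcome: str               # what should be true when this step is done
    check: Callable[[], bool]  # one concrete verification that can be run


def run_plan(milestones):
    """Run each check in order and stop at the first one that fails."""
    for number, step in enumerate(milestones, start=1):
        if not step.check():
            return f"stopped at milestone {number}: {step.outcome}"
    return "all milestones verified"
```

The design choice is the early stop: nothing later in the plan gets built on a step that has not been verified.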

One heuristic I like: I’ll ask, “If I add one new feature next week, how much code will I have to rewrite?” If the answer is “not much,” we’re probably on a healthy track.

AI also isn’t magical. It will take shortcuts (like stripping auth “just to test”) or drift from the architecture if you let it. Without active oversight, you still get inconsistent patterns and spaghetti code.
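
The stripped-auth shortcut is exactly the kind of thing a cheap, repeatable check can catch. Here is a minimal sketch, again in Python because it only speaks HTTP: hit each protected route with no credentials and list anything that does not answer 401 or 403. The route list is a hypothetical you would supply yourself, and treating 401/403 as “protected” is an assumption about how the API signals a rejected request.

```python
import urllib.error
import urllib.request


def anonymous_status(method, url):
    """Send a request with no credentials and return only the HTTP status code."""
    # Ask for JSON so the server is more likely to answer with a plain status
    # code than with an HTML login redirect (an assumption about the server).
    req = urllib.request.Request(url, method=method,
                                 headers={"Accept": "application/json"})
    try:
        with urllib.request.urlopen(req, timeout=5) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # 4xx/5xx arrive as exceptions in urllib


def find_unprotected_routes(routes, get_status=anonymous_status):
    """Return (method, url, status) for every route that let an anonymous call through."""
    exposed = []
    for method, url in routes:
        status = get_status(method, url)
        if status not in (401, 403):
            exposed.append((method, url, status))
    return exposed
```

An empty list is the passing result. Rerunning this after every milestone turns “did the AI quietly remove auth?” from a code-review question into a one-line check.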

Is coding totally dead? To me, the jury is still out. I want to rerun the experiment with a more complex project: heavier business logic, more edge cases, and integrations with third-party APIs. That’s where unfamiliar ecosystems, testing discipline, and system design matter more than “can I write the syntax.” Will my skills still transfer then, or will my lack of framework-specific knowledge defeat me in the end?

So what does this mean for junior and mid-level developers? This experiment worked for me because I’ve already done the unskippable stuff: production bugs, on-call nights, weird edge cases, tickets, and the slow pain of learning what breaks in the real world. That experience taught me to be disciplined, to break problems into steps, and to verify behavior instead of trusting vibes.

When you’re early in your career, “learning a language” is mostly just the vehicle for building that engineering brain. Java, Ruby, PHP: it almost doesn’t matter which. The point is what your brain learns while you use it: ruthless logic, debugging instincts, how to isolate variables, and how to turn a vague requirement into a sequence of checks.

AI can get you to “it works” faster. It can’t skip the journey that teaches you what to check, what to fear, and what “works” really means.